How spiders are used in search engine optimisation

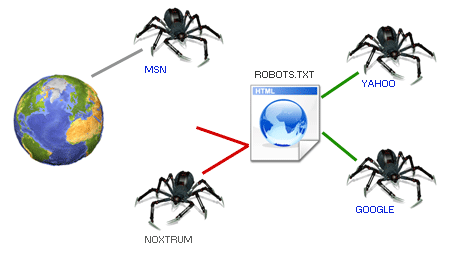

Spiders, also known as web crawlers or robots, are programs that browse websites methodically and analyze their content.

Spiders usually start from popular, heavily visited web pages and index them. From there they follow every link on each page they visit, with the aim of building a large index of the words they find and where those words appear. They also process the content of the most relevant HTML tags and attributes. Keep in mind that a spider only sees what the server returns for a URL: a page generated by PHP is indexed like any other HTML page, but dynamic content that appears only after a user action, such as submitting a form or logging in, generally cannot be indexed.
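The link-following and word-indexing steps described above can be sketched in a few lines of Python. This is only an illustration built on the standard library, not how any particular search engine works: the names `PageParser` and `index_page`, the sample HTML, and the `example.com` URL are all assumptions made for the example, and no network request is made.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin
import re


class PageParser(HTMLParser):
    """Collects outgoing links and visible words from one HTML page."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []
        self.words = []
        self._skip_depth = 0  # greater than 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
        elif tag == "a":
            href = dict(attrs).get("href")
            if href:
                # Resolve relative links against the page's own URL
                self.links.append(urljoin(self.base_url, href))

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0:
            self.words.extend(re.findall(r"[a-z0-9]+", data.lower()))


def index_page(url, html, index):
    """Record each word's (url, position) in the index and return the links found."""
    parser = PageParser(url)
    parser.feed(html)
    parser.close()
    for position, word in enumerate(parser.words):
        index.setdefault(word, []).append((url, position))
    return parser.links


# Hypothetical page content, used here instead of a real download
index = {}
sample_html = '<html><body><h1>Web spiders</h1><a href="/about">About spiders</a></body></html>'
links = index_page("https://example.com/", sample_html, index)
```

A real spider would add the returned links to a queue of pages still to visit and repeat the process for each of them.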

Search engine spiders follow links from one page to the next, and in this way they can travel through millions of web pages on the Internet. To rank a website near the top of the search results, we therefore have to let these robots analyze and index the site correctly, and applying SEO methodologies to our websites is how we make that happen.
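One common way a site tells robots what they may visit is a `robots.txt` file. The sketch below uses Python's standard `urllib.robotparser` module to check what a given set of rules allows. The rules themselves, the `ExampleBot` user agent, and the URLs are assumptions made up for the illustration.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: block the admin area, allow everything else
robots_txt = """\
User-agent: *
Disallow: /admin/
Allow: /
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# Ask whether a crawler identifying itself as "ExampleBot" may fetch each URL
can_crawl_home = parser.can_fetch("ExampleBot", "https://example.com/")
can_crawl_admin = parser.can_fetch("ExampleBot", "https://example.com/admin/login")
```

Running a check like this against your own rules is a quick way to confirm that the pages you want ranked are not accidentally blocked.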

Spiders store the information gathered from millions of pages in decentralized databases so that, when a user runs a search, results can be delivered as quickly and accurately as possible. To achieve this speed and accuracy, search engine companies have built data centers around the world, with decentralized databases and enough computing power to process information very quickly. All this computing power is needed not only to serve the users who perform searches, but also because, while delivering relevant results, the search engines are ranking the most popular websites at the same time.
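To give a rough idea of why a pre-built index makes answering a search fast, here is a minimal sketch of a lookup over such an index. The index contents, the `search` function, and the ranking rule (pages with more occurrences of the query words come first) are all assumptions chosen for simplicity. Real search engines rank pages using far more signals than this.

```python
from collections import Counter

# Hypothetical pre-built index: word -> one entry per occurrence on a page
index = {
    "web": ["a.example/1", "a.example/2"],
    "spiders": ["a.example/1", "a.example/1", "a.example/3"],
}


def search(query, index):
    """Return pages containing every query word, most occurrences first."""
    words = query.lower().split()
    if not words:
        return []
    counts = Counter()
    matching = None
    for word in words:
        pages = index.get(word, [])
        counts.update(pages)
        page_set = set(pages)
        # Keep only the pages that contain all words seen so far
        matching = page_set if matching is None else matching & page_set
    # Sort by descending occurrence count, then by URL for a stable order
    return sorted(matching, key=lambda page: (-counts[page], page))


both_words = search("web spiders", index)
one_word = search("spiders", index)
```

The search never touches the original pages. It only reads the lists stored in the index, which is what allows results to come back quickly.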
